Website Security & NIS2 Analysis

Reduce cyber risk with automated scans of websites, domains and email infrastructure.

Unified intelligence analysis for fraud detection, entity correlation and cyber risk analysis.

XCOM.DEV is a Dutch technology initiative for AI-assisted fraud analysis, intelligence analysis and cyber intelligence, focused on digital signals, identities and risk analysis.

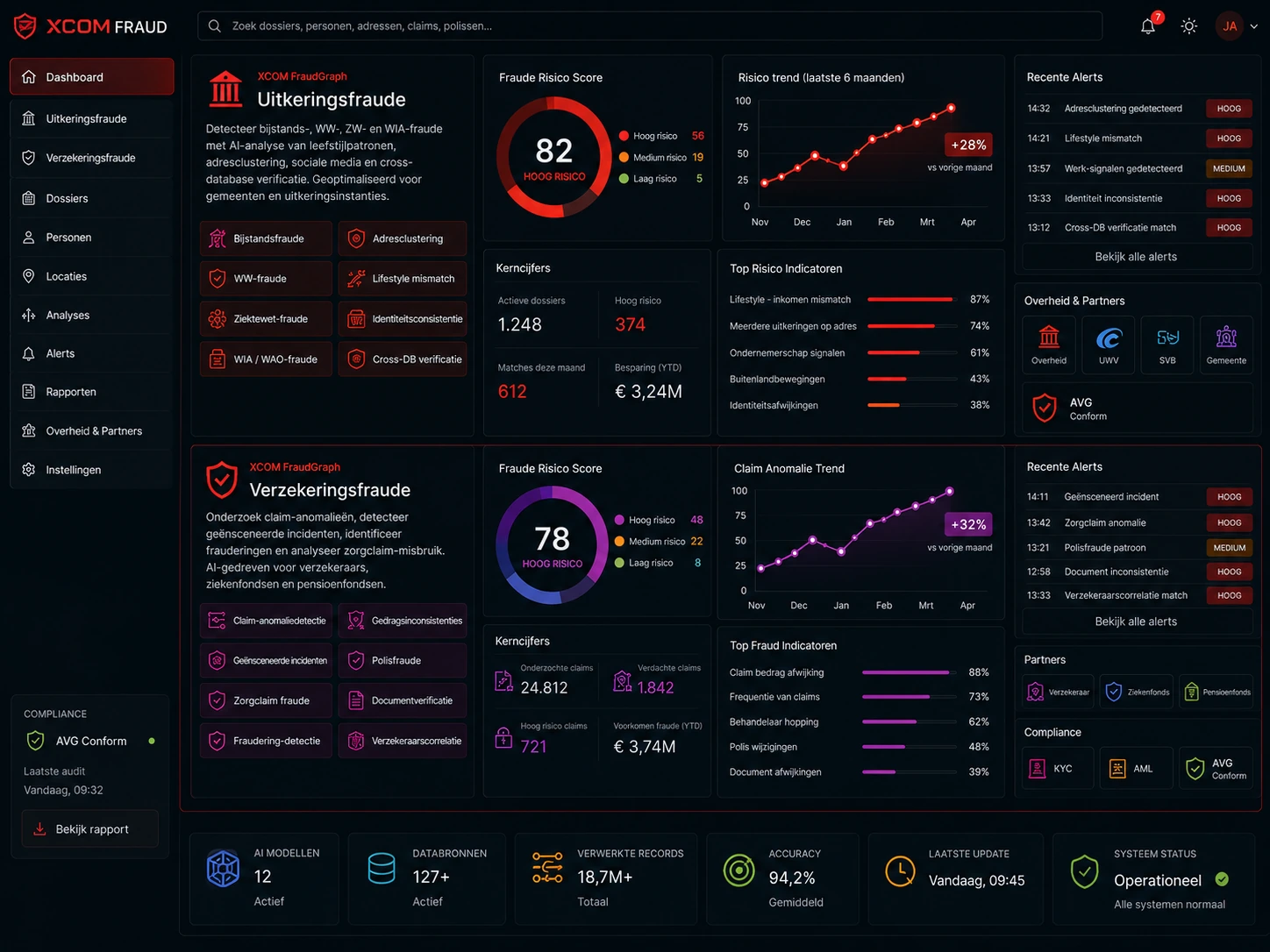

Investigation modules and intelligence workflows for fraud analysis, identity research and cyber risk detection.

Data from websites, domains, documents and open sources is linked within a unified intelligence graph for analysis, correlation and risk detection.

Each module is built for a specific target audience and attack vector. Together they form a modular intelligence platform for fraud analysis, identity analysis and cyber risk detection.

Reduce cyber risk with automated scans of websites, domains and email infrastructure.

Analyze digital footprints, aliases and network relationships via OSINT and graph intelligence.

Detect anomalous patterns, address clusters and risk signals via AI-driven correlation analysis.

XCOM.DEV develops technology for fraud analysis, intelligence analysis and cyber risk detection. The focus is on surfacing signals, connections and risks more quickly within complex datasets and digital infrastructures.